In the executive suites of the DACH region, the initial fascination with generative AI is increasingly giving way to pragmatic sobriety. This is good news: as long as AI is viewed as a magic black box, it remains an incalculable risk. Once executives understand the mechanistic principles behind the models, however, the technology changes from an opaque risk factor into a precisely controllable, high-performance instrument.

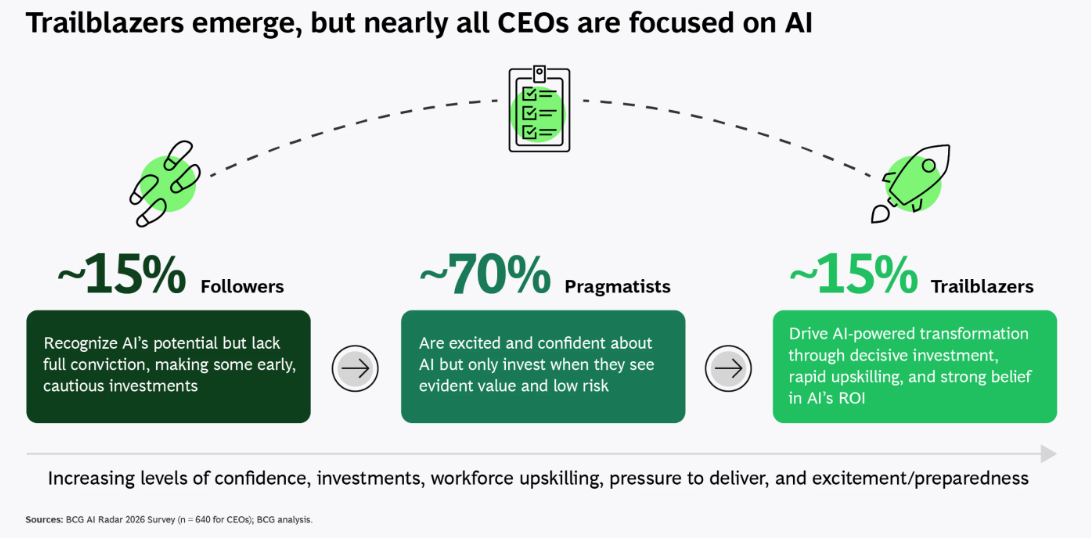

The current mood in executive circles is ambivalent. On the one hand, nearly 80 percent of companies regard generative AI as a decisive factor for their future competitiveness. On the other hand, one-third of Swiss executives feel overwhelmed by the technology, according to the "AI Marketing Executive Pulse 2025" from the University of St. Gallen.

This uncertainty is understandable but unnecessary. It often stems from the misconception that one must "believe" in AI rather than understand it for what it is: a statistical tool whose output follows probabilities, not human logic.